The Other 199,800 Lines

What Karpathy built when he stopped simplifying

Andrej Karpathy distilled GPT to 200 lines of Python and called it the algorithmic essence of ChatGPT. He’s right. I read the whole thing and understood every line. It didn’t help me ship a single feature the next morning.

Then he built autoresearch. And that helped a lot.

A Decade of Distillation

Karpathy has been simplifying downward for ten years. micrograd, makemore, nanoGPT, and now microgpt. Each project strips another layer of complexity until you’re left with the bare mechanism: embeddings, attention, a feed-forward network, backpropagation, and a training loop. 200 lines. 4,192 parameters. Runs in a minute on a MacBook.

Nobody does this better. His ability to take something that feels like magic and show you it’s just math is genuinely rare. microgpt is the best starting point for understanding LLMs that exists.

But then he says something in that post that I keep coming back to:

“None of [the production additions] alter the core algorithm and the overall layout, but they are what makes it actually work at scale.”

“What makes it actually work at scale,” tucked in after the real insight. A throwaway. I’ve been living in that sentence for two years.

Then He Built the Harness

A few weeks later, Karpathy released autoresearch: a framework where AI agents autonomously run ML experiments overnight. One GPU, one file, one metric. 46,000 stars in its first month.

Look at what he built. Not the algorithm. The system around it.

program.md: a markdown file that instructs the AI agent on what to do, what it can and can’t modify, and how to evaluate its own work. The human iterates on this file to improve the agent’s behavior. If you’ve worked with Claude Code skills or system prompts in production, you’ve built one of these. It’s a skill file.

Fixed 5-minute training budget: every experiment runs for exactly 5 minutes of wall-clock time. Not because 5 minutes is optimal for ML research, but because it makes experiments comparable, prevents runaway compute, and lets you estimate throughput. About 12 experiments per hour. 100 overnight. That’s a factory tolerance: a fixed constraint that makes the output predictable.
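Here is a minimal sketch of what a wall-clock budget looks like in code, plus the throughput arithmetic. This is my illustration, not autoresearch's implementation: `run_with_budget`, `experiments_per_hour`, and `hours_for` are hypothetical names, and the arithmetic ignores setup and evaluation overhead.

```python
import time


def run_with_budget(step_fn, budget_seconds, clock=time.monotonic):
    """Call step_fn(step_index) until the wall-clock budget is spent.

    Hypothetical helper: the loop stops on elapsed time, not on step count,
    so every experiment gets the same amount of time regardless of model size.
    """
    start = clock()
    steps = 0
    while clock() - start < budget_seconds:
        step_fn(steps)
        steps += 1
    return steps


def experiments_per_hour(budget_minutes):
    """Throughput if each experiment takes exactly budget_minutes."""
    return 60 / budget_minutes


def hours_for(n_experiments, budget_minutes):
    """Hours needed to run n_experiments back to back."""
    return n_experiments / experiments_per_hour(budget_minutes)
```

With a 5-minute budget, `experiments_per_hour(5)` is 12, and `hours_for(100, 5)` comes out a little over 8 hours, which is where "100 overnight" comes from. The `clock` parameter exists only so the loop can be tested without waiting.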

val_bpb as the single metric: one number, lower is better. The evaluation harness lives in prepare.py, which agents cannot modify. The ground truth is immutable. The agent can change anything about the model, the optimizer, the architecture, but it cannot change how success is measured. That’s backpressure verification: the system defining its own boundaries.
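One way a harness could enforce an immutable eval, sketched under my own assumptions: fingerprint the evaluation file at the start of a session and refuse any result if the fingerprint changes. I don't know how autoresearch enforces this (it may rely on the instructions alone), so treat `fingerprint` and `FrozenFile` as hypothetical names.

```python
import hashlib
from pathlib import Path


def fingerprint(path):
    """SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


class FrozenFile:
    """Remembers a file's fingerprint so later edits can be detected.

    Hypothetical guard: the harness would create this once for the eval
    file (for example prepare.py) before handing control to the agent.
    """

    def __init__(self, path):
        self.path = Path(path)
        self.expected = fingerprint(self.path)

    def is_intact(self):
        # True only if the file is byte-for-byte what it was at creation.
        return fingerprint(self.path) == self.expected
```

A harness would check `is_intact()` before trusting any reported metric and discard the experiment if the check fails.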

The keep/discard loop: if val_bpb improves, keep the change and advance. If it doesn’t, revert. No negotiation. No “it feels better.” One metric, one decision. And the whole thing runs autonomously in a loop marked NEVER STOP, continuing until the human physically interrupts it.
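The loop is small enough to sketch. This is a toy version under my own assumptions, not Karpathy's code: `propose` and `evaluate` are hypothetical callables standing in for "the agent edits train.py" and "the fixed eval reports val_bpb", and `max_experiments` stands in for the human interrupting the run.

```python
def keep_discard_loop(baseline, propose, evaluate, max_experiments):
    """Keep a candidate only if its score is strictly lower than the best so far."""
    best = baseline
    best_score = evaluate(best)
    history = []  # (experiment index, score, kept?)
    for i in range(max_experiments):
        candidate = propose(best, i)
        score = evaluate(candidate)
        kept = score < best_score  # one metric, one decision
        if kept:
            best, best_score = candidate, score
        # On discard nothing changes: the next proposal starts from `best` again.
        history.append((i, score, kept))
    return best, best_score, history


# Toy stand-ins: the "model" is a single number and the metric is its
# squared distance from 3.0 (lower is better).
deltas = [1.0, -0.5, 1.0, 2.0, 0.5]
best, best_score, history = keep_discard_loop(
    baseline=0.0,
    propose=lambda current, i: current + deltas[i],
    evaluate=lambda x: (x - 3.0) ** 2,
    max_experiments=len(deltas),
)
print(best, best_score)                   # 2.5 0.25
print([kept for _, _, kept in history])   # [True, False, True, False, True]
```

Note that a tie is a discard: the fourth candidate scores exactly the same as the current best and gets reverted. Whether ties should be kept is a design choice I'm assuming here, not something the source specifies.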

If you’ve used any autonomous coding loop, you recognize this architecture immediately. Constrained scope, immutable eval, agent instructions in a markdown file, run until interrupted. The pattern keeps showing up because it works.

What This Tells Us

The person who distilled GPT to 200 lines, when he wanted to actually DO something with AI agents, built a harness. Not a better algorithm. Not a smarter model. A program.md, an immutable eval, a fixed budget, and a loop.

Most of the engineers on my team couldn’t explain how attention works. They don’t need to. They’re calling an API. The thing that determines whether their work succeeds or fails is everything around that API call: the eval framework, the failure handling, the skill files, the constraints.

microgpt teaches you what LLMs are. autoresearch teaches you what they need.

autoresearch got 46,000 stars. People are hungry for this. Not the algorithm, the system around it.

The Vocabulary Gap

What strikes me most is the vocabulary. Karpathy calls program.md “baseline instructions for AI agents.” I call it a skill file. He calls the 5-minute budget a “fixed time budget.” I call it a factory tolerance. He calls the keep/discard loop an “experiment loop.” I call it backpressure verification.

We’re describing the same discipline with different words. The emerging term is harness engineering. Karpathy doesn’t name it. He just builds it.

That’s actually the interesting gap. The practice is converging. Practitioners across different domains are arriving at the same patterns: constrained scope, immutable evaluation, human-authored instructions, autonomous loops. But we don’t have shared vocabulary yet. In the same way that “DevOps” gave a name to practices that already existed, harness engineering (or whatever it ends up being called) is waiting for its name to stick.

Where This Leaves Us

I wrote in “What Outlives the Code” that the durable output of engineering is shifting from code to the harness around it: documentation, skills, intent. autoresearch is the cleanest proof I’ve seen.

train.py changes every experiment. The agent rewrites it, discards it, rewrites it again. 100 times overnight. The code is disposable.

program.md persists. The human iterates on it across sessions. It captures judgment: what to try, what to constrain, what to measure. The harness outlives the code it governs.

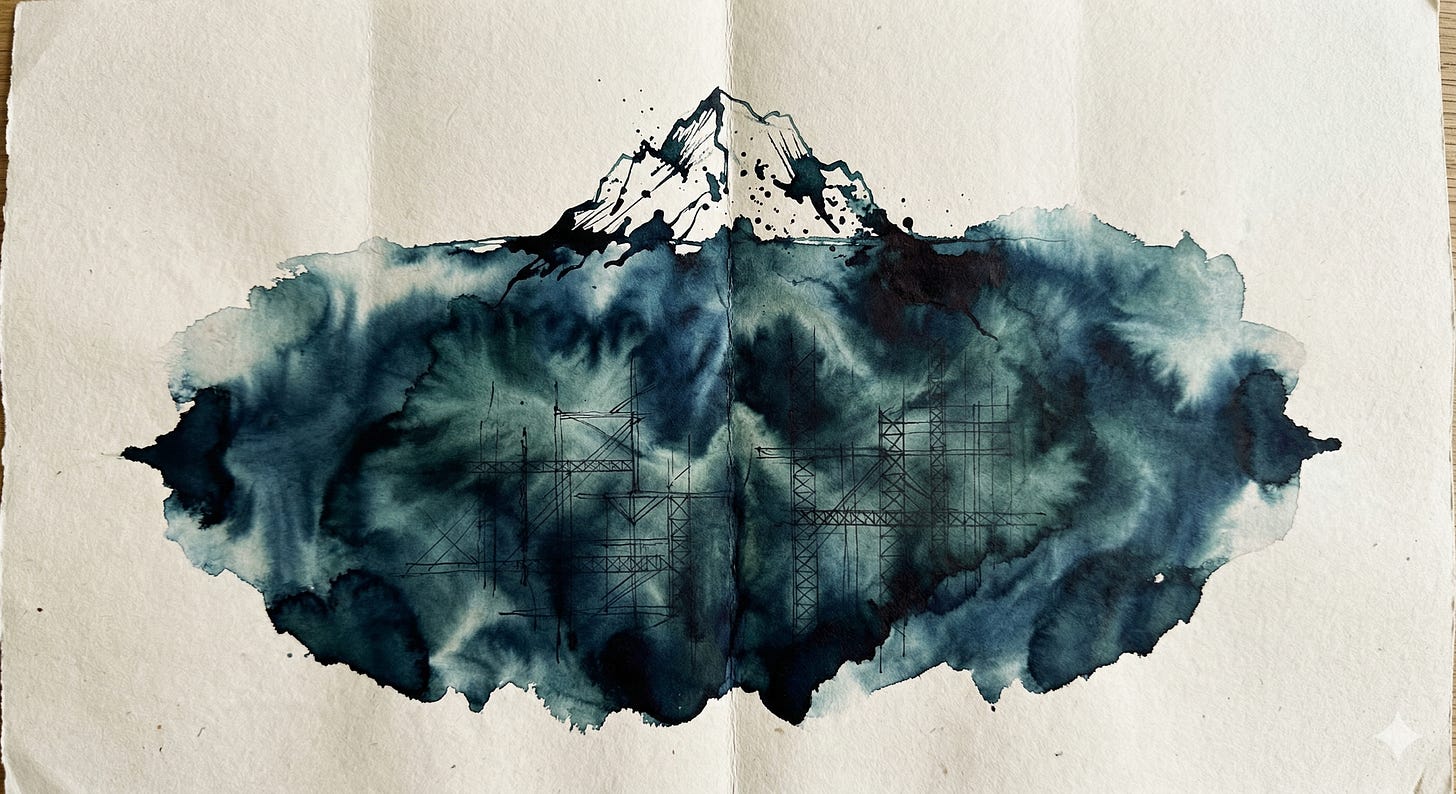

The algorithmic essence fits in 200 lines. The engineering essence is a program.md, an immutable eval, and the accumulated judgment about what to constrain. Even Karpathy builds it when it’s time to ship.

That’s not scaffolding. That’s a factory that builds itself.