Harness Engineering Has a Name Now

A maturity ladder for the infrastructure everyone's suddenly building.

September 2025. I was building what I called an AI Goalie: an agent that reads production logs on a cron job and compares them against existing GitHub issues. It updates those issues with new context if anything changed, and it hashes the log signatures so we’re not burning tokens re-processing things we’ve already seen. Then it prioritizes and researches the remaining issues so an engineer can pick up a solution rather than start from scratch.

It had a fixed structure. A lot of variability inside that structure. A hard constraint on token spend. And it needed to do real work without anyone watching it.

I didn’t have a name for what I was building. I just called it the harness.

Last week, OpenAI published a piece called “Harness Engineering.” Martin Fowler wrote it up. The Reddit thread hit the front page. The term is now mainstream.

That’s not a criticism. It’s a signal.

When an idea gets named by a big lab and written up by Fowler, it means the early adopters have moved past it and the mainstream is about to discover it. What usually follows: a wave of teams building Level 1 harnesses, calling it done, and wondering why the output isn’t reliable enough to trust.

Here’s what the levels actually look like, what goes wrong at each one, and what to build next.

The Harness Maturity Ladder

Level 0: Raw calls

You’re prompting the model directly. Maybe a system prompt, maybe not. The workflow lives in your head or in a Notion doc nobody reads. Outputs vary across runs in ways you can’t explain. You re-run when it goes wrong and move on.

What goes wrong: Everything is implicit. When the model behaves unexpectedly, you have no way to know if it’s the prompt, the input, the model version, or something you changed last week. You can’t improve what you can’t observe.

What to build next: Write down the stages. Even informally. Input preparation, model call, output handling. Name them. That’s the start of a Level 1 harness.
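A minimal sketch of what naming the stages can look like, assuming Python. The three function names mirror the stages listed above, the model call is a stub so the example runs offline, and everything here is illustrative rather than the Goalie's actual code.

```python
from typing import Callable

# Hypothetical stage functions; call_model is a stand-in for a real model call.
def prepare_input(raw: str) -> str:
    return raw.strip()

def call_model(prompt: str) -> str:
    return f"summary of: {prompt}"

def handle_output(text: str) -> dict:
    return {"result": text}

# The stages are named and ordered explicitly instead of living in someone's head.
STAGES: list[tuple[str, Callable]] = [
    ("prepare_input", prepare_input),
    ("call_model", call_model),
    ("handle_output", handle_output),
]

def run(raw: str) -> tuple[dict, list[str]]:
    """Run each named stage in order and record which stages ran."""
    trace = []
    value = raw
    for name, stage in STAGES:
        value = stage(value)
        trace.append(name)
    return value, trace
```

The trace is the point: once stages have names, a bad result can be pinned to one of them.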

Level 1: Fixed pipeline

You’ve defined stages. Input goes in, processing happens, output comes out. Prompt templates are stable. You can describe what the harness does in one sentence. Someone else on the team can run it and get the same result.

This is where “harness engineering” will land for most teams picking up the term this year. It feels like a real system. It holds on the happy path.

What goes wrong: It breaks whenever input varies outside what you designed for. A log format changes slightly. An upstream API returns a new field. The prompt that worked for 80% of cases silently fails on the other 20%, and you find out from a user, not a monitor. Level 1 harnesses are brittle because they assume the world is stable.

What to build next: Add constraints. Token budgets. Input validation before the model call. Output schema enforcement after. Start treating the model as a component with operating costs, not an oracle.
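A rough sketch of those three constraints, assuming Python and a model that returns JSON. The budget value, the field names, and the four-characters-per-token estimate are all assumptions for illustration; a real harness would use the tokenizer for its model.

```python
import json

MAX_PROMPT_TOKENS = 2000  # assumed budget, not a recommendation

def estimate_tokens(text: str) -> int:
    # Crude heuristic (about 4 characters per token); swap in a real tokenizer.
    return max(1, len(text) // 4)

def validate_input(log_line: str) -> str:
    """Reject input before it costs anything."""
    cleaned = log_line.strip()
    if not cleaned:
        raise ValueError("empty input")
    if estimate_tokens(cleaned) > MAX_PROMPT_TOKENS:
        raise ValueError("input exceeds token budget")
    return cleaned

# Hypothetical output schema: field name -> expected type.
REQUIRED_FIELDS = {"severity": str, "summary": str}

def enforce_schema(raw_output: str) -> dict:
    """Parse model output and fail loudly if it does not match the schema."""
    data = json.loads(raw_output)  # non-JSON output raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("output is not a JSON object")
    for field, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected):
            raise ValueError(f"missing or mistyped field: {field}")
    return data
```

Failing loudly on both sides of the model call is what turns the silent 20% into something a monitor can see.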

Level 2: Constrained and efficient

The Goalie lived here. Hashing log signatures to avoid reprocessing content the model had already seen. Retrieval to pull only the relevant issue context into the prompt, not the entire backlog. A defined token budget that held under production load, not just in testing.
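A sketch of signature hashing, assuming Python. How the Goalie actually computed its signatures isn't described here, so the normalization step (lowercasing and masking digits) is an assumption, and the function names are hypothetical.

```python
import hashlib
import re

def log_signature(line: str) -> str:
    """Hash a log line after masking the parts that vary between occurrences."""
    # Assumption: digits (ids, durations, ports) are noise for dedup purposes.
    normalized = re.sub(r"\d+", "<n>", line.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def filter_unseen(lines: list[str], seen: set[str]) -> list[str]:
    """Return only lines whose signature is new, recording them in `seen`."""
    fresh = []
    for line in lines:
        sig = log_signature(line)
        if sig not in seen:
            seen.add(sig)
            fresh.append(line)
    return fresh
```

Only the lines that come back from `filter_unseen` reach the model, which is where the token savings come from. In production the `seen` set would live in persistent storage between cron runs.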

At this level you’ve stopped thinking about the model and started thinking about the system. Input preparation has opinions. The model call is one step in a pipeline with real infrastructure around it.

What goes wrong: The harness is efficient but not verifiable. You know it’s running. You don’t always know if it’s right. Output quality is assessed by someone reviewing the results after the fact, which means review becomes the bottleneck. You can run the harness overnight. You can’t review overnight output in the morning without losing the day.

What to build next: Design for self-verification. Ask: what does “done correctly” look like, and can the agent check that itself before it hands off?

Level 3: Self-verifying

This is the backpressure verification layer. The agent’s exit criteria are defined before the run starts, written so the agent can evaluate them. It knows what done looks like. It checks its own output against the criteria before producing the result.

This is what makes the Ralph Wiggum pattern work at scale. The PR is the shared artifact. The harness generates output you can evaluate with the same tools you use to evaluate human output. CI passes or it doesn’t. The criteria are explicit, not implicit.

What goes wrong: Teams implement this mechanically without thinking about what the criteria actually need to be. “Did the agent complete the task?” is not a good criterion. “Do the tests pass, does the schema validate, does the output match the expected shape?” is. Weak criteria mean the verification layer gives false confidence. The harness reports success. The output is wrong in ways the criteria didn’t cover.

Who reviews the reviewer?

What to build next: Treat your acceptance criteria like production code. Version them. Review them. They’re the specification the harness is working against. If they’re vague, the harness will find creative ways to satisfy them that don’t match your intent.
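One way to make criteria explicit and versionable, sketched in Python. The criterion names, the output fields, and the version label are hypothetical; the point is only that each check is a named, reviewable unit rather than a vague “did it work?”.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Criterion:
    name: str
    check: Callable[[dict], bool]

# Hypothetical version label; bump it whenever the criteria change.
CRITERIA_VERSION = "2025-09-01"

CRITERIA = [
    Criterion("tests_pass", lambda out: out.get("tests_passed") is True),
    Criterion("has_issue_id", lambda out: isinstance(out.get("issue_id"), int)),
    Criterion("summary_nonempty", lambda out: bool(str(out.get("summary", "")).strip())),
]

def verify(output: dict) -> list[str]:
    """Return the names of failed criteria; an empty list means the run may hand off."""
    return [c.name for c in CRITERIA if not c.check(output)]
```

A failed run reports which criterion it missed, and a passing run only proves what the list covers. Anything not in `CRITERIA` is unverified by definition.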

Level 4: Harness as infrastructure

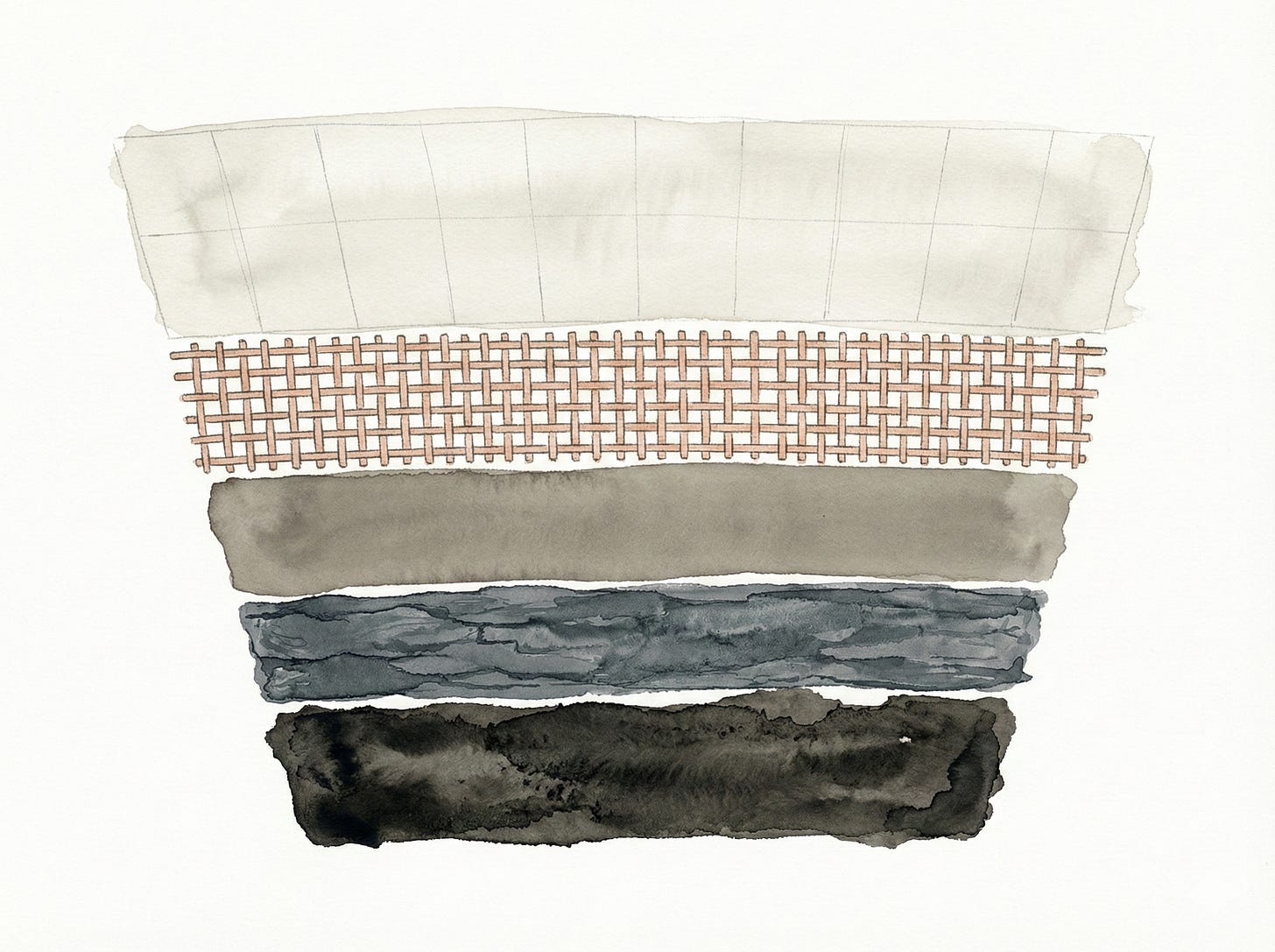

The harness is no longer a workflow around a model. It’s part of your data infrastructure. Skills as extractors. Medallion pattern. Attribution as configuration, not code. The model is nearly invisible. The harness has become the product.

When the BI team starts relying on harness output to make decisions, you’re here. When product asks “can we add a new dimension to that report?” and the answer is changing a config file rather than writing a new pipeline, you’re here.
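A small sketch of what “changing a config file rather than writing a new pipeline” can mean, assuming Python and records already extracted by the harness. The config shape, dimension names, and record fields are all hypothetical.

```python
from collections import Counter

# Hypothetical config: each report dimension maps to a field on extracted records.
REPORT_CONFIG = {
    "dimensions": {
        "by_service": "service",
        "by_severity": "severity",
    }
}

def build_report(records: list[dict], config: dict) -> dict[str, dict[str, int]]:
    """Count records per value for every dimension named in the config."""
    report = {}
    for dimension, field in config["dimensions"].items():
        report[dimension] = dict(Counter(r.get(field, "unknown") for r in records))
    return report
```

Adding a region breakdown here means adding one entry, `"by_region": "region"`, to the config. `build_report` does not change.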

What goes wrong: The harness becomes load-bearing infrastructure without the operational maturity to match. No versioning on skills. No rollback on config changes. No monitoring on output drift. The same things that bring down data pipelines bring this down, because it is a data pipeline, just with an LLM in the middle.

What to build next: Treat it like the infrastructure it is. Observability, versioning, rollback. The model is a dependency. Pin it.
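A minimal sketch of pinning and stamping, assuming Python. The model identifier and skill versions are placeholders, not real names; the idea is that every output records exactly which configuration produced it, so drift can be traced and a rollback has a target.

```python
import hashlib
import json

# Hypothetical pinned configuration; the model id and versions are placeholders.
HARNESS_CONFIG = {
    "model": "example-model-2025-01-01",
    "skills": {"extract_issue": "1.2.0"},
    "criteria_version": "2025-09-01",
}

def config_fingerprint(config: dict) -> str:
    """Short, stable hash of the config, independent of key order."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

def stamp(output: dict, config: dict) -> dict:
    """Attach the pinned model and config fingerprint to a harness output."""
    return {
        **output,
        "model": config["model"],
        "config_fingerprint": config_fingerprint(config),
    }
```

When output drifts, comparing fingerprints across runs tells you whether the config changed or the world did.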

What Teams Get Wrong When They Pick Up the Term

The biggest mistake is architectural: teams hear “harness” and build a wrapper. One function that calls the model, handles retries, maybe logs the output. They call it the harness. They ship it.

That’s scaffolding, not a harness. A harness has opinions about the whole workflow, not just the model call. It is the whole system, the whole software factory, people included.

The second mistake is scope. Teams build a harness for one task and stop. The value compounds when the harness handles a class of tasks, not a single one. The Goalie wasn’t useful because it could process one log file. It was useful because it could process any log file that matched the expected shape, consistently, without supervision.

The third mistake is skipping levels. Teams see the Level 4 case (harness as BI infrastructure) and try to build it from scratch. They end up with an overengineered Level 1 that collapses under its own complexity. The levels exist because each one builds the operational intuition you need for the next. You earn Level 4 by running Level 2 in production for long enough to understand what breaks.

The Bottleneck the Term Doesn’t Mention

The OpenAI piece frames harness engineering as a way to scale agent output. That’s right. What it undersells: the bottleneck moves; it doesn’t disappear. (It’s reminiscent of The Phoenix Project.)

At Levels 0 and 1, the bottleneck is output quality. The harness produces results you can’t trust, so you review everything.

At Level 2, the bottleneck is review throughput. You can run more harness instances than you can review. You accelerate to the next constraint.

At Levels 3 and 4, the bottleneck is criteria quality. The harness runs fast and self-verifies. But it only catches what the criteria cover. Writing good acceptance criteria is hard. It requires deep understanding of what correct output actually looks like across the full input distribution, not just the cases you’ve seen.

This is the work most teams aren’t thinking about yet. Not building the harness. Defining what the harness should be verifying against.

The harness got a name. The next problem is already in production, in the gap between your acceptance criteria and the edge cases they don’t cover.

Previously: The AI Goalie (September 2025), Ralph Wiggum for Teams, When Your Harness Becomes Your BI Team.