The Only Eval That Matters

GPT Image 1.5 won every benchmark. Users still prefer Nano Banana Pro.

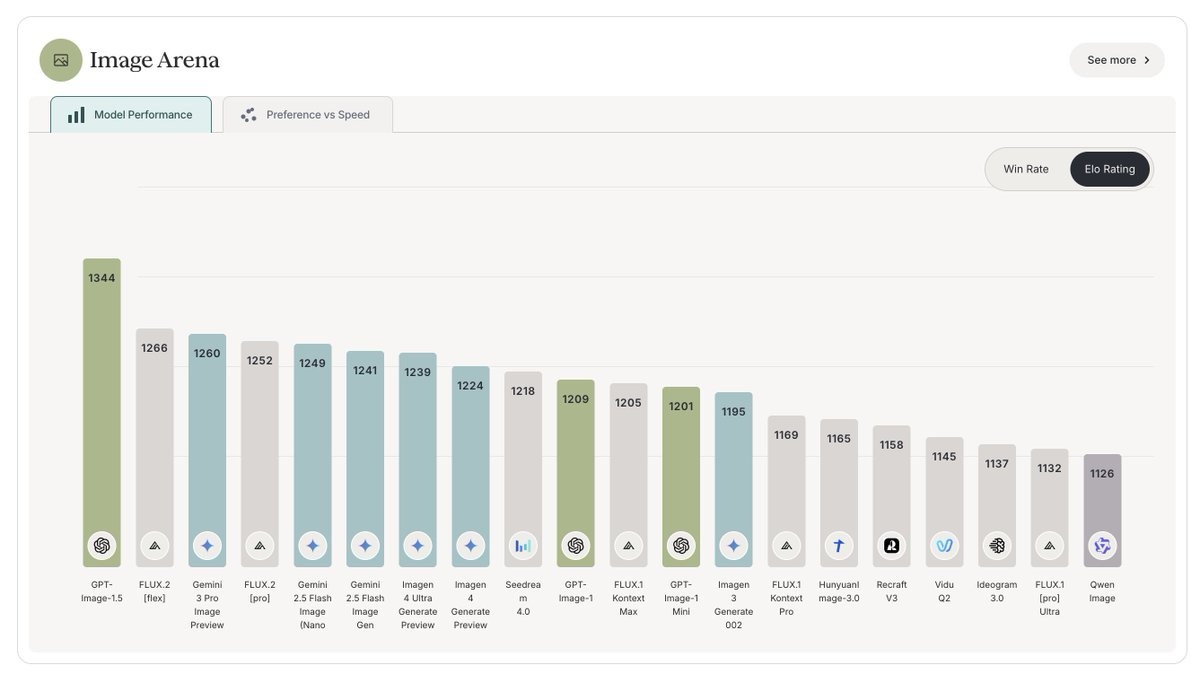

GPT Image 1.5 launched this week and immediately took #1 on every leaderboard that matters. LMArena: 1277. Design Arena: 1344. Artificial Analysis: 1272. Clean sweep.

Reddit, Twitter, and Discord all agree on something else: Nano Banana Pro is better.

This happens more than we admit. A model tops the benchmarks, ships to production, and users reach for something else. The gap between “won the eval” and “won the workflow” is where most AI projects die.

I’ve been thinking about why.

Benchmarks measure capability. Wallets measure value.

Arena votes answer “which do you prefer in this A/B comparison?” Spend answers “which one solves my actual problem well enough that I’ll pay?” Different questions entirely.

I learned this the hard way with agent work. Early on I chased benchmark leaders for customer service automation. Model X scored highest on intent classification. Model Y topped the summarization leaderboards. Stitched them together and shipped.

The agents worked. They just weren’t good, in ways the benchmarks never captured.

Support leads could tell within a day which model was running. Not from accuracy metrics, but from how the responses felt. The benchmark winner generated grammatically perfect, contextually appropriate responses that customers didn’t trust. The vibe was off.

Your evals > public evals

Generic benchmarks optimize for generic tasks. Your use case isn’t generic.

GPT Image 1.5 probably generates objectively better images across the distribution of all possible prompts. But Nano Banana Pro might nail the specific prompt patterns creative professionals actually use. The benchmark measures the former. The credit card measures the latter.

The “good enough” threshold

Sometimes the #4 model at 1/10th the cost is the right answer. Sometimes it isn’t. Only your workflow tells you which.

When I evaluate models now, the first question isn’t “what’s the benchmark?” It’s “what would I pay for this output?” Not hypothetically. Actually.

Run 50 of your real prompts through the model. Look at the outputs. Ask yourself: would I pay for this? Would my customer?

If the answer is “yes, grudgingly,” that’s a maybe. If the answer is “absolutely, this saves me 2 hours,” that’s a winner. If you’re hedging, that’s a no.
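If you want to keep yourself honest across all 50 prompts, the three-way rubric is simple enough to write down. The sketch below assumes you record two things per output by hand: whether you’d pay without hedging, and whether that yes was grudging. The names `Verdict`, `classify`, and `tally` are hypothetical, and the review counts in the usage lines are made-up illustration, not real results. The code only counts; the judgment is still yours.

```python
from collections import Counter
from enum import Enum


class Verdict(Enum):
    """The three outcomes of the "would I pay for this?" question."""
    WINNER = "winner"  # "absolutely, this saves me 2 hours"
    MAYBE = "maybe"    # "yes, grudgingly"
    NO = "no"          # you caught yourself hedging


def classify(would_pay: bool, grudgingly: bool) -> Verdict:
    """Map one honest answer to a verdict.

    would_pay:  did you say yes at all, without hedging?
    grudgingly: was that yes reluctant?
    """
    if not would_pay:
        return Verdict.NO
    return Verdict.MAYBE if grudgingly else Verdict.WINNER


def tally(verdicts: list[Verdict]) -> dict[str, float]:
    """Return the share of prompts that landed in each bucket."""
    if not verdicts:
        raise ValueError("judge at least one real prompt first")
    counts = Counter(verdicts)
    total = len(verdicts)
    return {v.value: counts[v] / total for v in Verdict}


# Hypothetical reviews of 50 real prompts: 30 enthusiastic, 12 grudging, 8 hedged.
reviews = [(True, False)] * 30 + [(True, True)] * 12 + [(False, False)] * 8
shares = tally([classify(pay, grudging) for pay, grudging in reviews])
print(shares)  # {'winner': 0.6, 'maybe': 0.24, 'no': 0.16}
```

Keeping “maybe” as its own bucket, rather than folding it into “yes,” is the point: a model that is mostly grudging yeses looks fine on a pass rate and still loses the workflow.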

The practical takeaway

Before adopting any model based on leaderboard position:

Run your actual prompts through it. Not synthetic benchmarks. Your prompts.

Track what you’d pay for the outputs. Real money, real decisions.

Compare against what you’re using now. Not against other benchmarks.
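One way to run that comparison is head-to-head on the same prompts, netting out what each output costs to generate. The sketch below is a hypothetical setup, not a prescribed method. It assumes you write down, per prompt, what you’d actually pay for your current model’s output and for the candidate’s, and that you know a per-output price for each. Amounts are integer cents to avoid floating-point ties. `Judgment`, `compare`, the prompt IDs, and every number in the usage lines are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Judgment:
    """One real prompt, judged by a human: what would you actually pay for each output?"""
    prompt_id: str
    pay_current: int    # cents you'd pay for your current model's output
    pay_candidate: int  # cents you'd pay for the candidate model's output


def compare(judgments: list[Judgment], cost_current: int, cost_candidate: int) -> dict:
    """Head-to-head on your own prompts. Costs are per-output prices in cents."""
    wins = losses = ties = 0
    net_current = net_candidate = 0
    for j in judgments:
        # Value of an output = what it's worth to you minus what it cost to make.
        value_current = j.pay_current - cost_current
        value_candidate = j.pay_candidate - cost_candidate
        net_current += value_current
        net_candidate += value_candidate
        if value_candidate > value_current:
            wins += 1
        elif value_candidate < value_current:
            losses += 1
        else:
            ties += 1
    return {
        "candidate_wins": wins,
        "candidate_losses": losses,
        "ties": ties,
        "net_current": net_current,
        "net_candidate": net_candidate,
    }


# Hypothetical: the leaderboard leader costs 10x more per output than what you use today.
judgments = [
    Judgment("refund-email", pay_current=200, pay_candidate=300),
    Judgment("billing-faq", pay_current=150, pay_candidate=100),
    Judgment("outage-reply", pay_current=0, pay_candidate=0),
]
result = compare(judgments, cost_current=4, cost_candidate=40)
print(result)
# {'candidate_wins': 1, 'candidate_losses': 2, 'ties': 0, 'net_current': 338, 'net_candidate': 280}
```

In this made-up example the candidate produces the single best output but still loses overall once price is counted, which is the “#4 model at 1/10th the cost” case in miniature.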

OpenAI shipped a genuinely better model this week. The benchmarks prove it. But better on benchmarks isn’t better in your workflow until your workflow says so.

The only evals that matter are your own.